- When a developer checked in code that did not break the build they got 10 points, and lost 10 if they broke it.

- Adding a unit test to a successful build earned an additional point.

However, the SQL Server warehouse does. It is called tfs_warehouse and has a number of useful fact and dimension tables.

This is a good reference from Microsoft for the relational version of the data warehouse.

My approach to creating this report was as follows:

1. Create queries and/or views that produce the leaderboard as a result set. For simplicity I am sticking to the two criteria described above.

2. Create an SSRS report based on the query created in step 1.

3. Publish this report so it is accessible from TFS project dashboards.

```sql
SELECT dper.Name
      ,det.BuildSK BuildSK
      ,CASE BuildStatusSK WHEN 3 THEN 1 ELSE NULL END Succeeded
      ,CASE BuildStatusSK WHEN 2 THEN 1 WHEN 1 THEN 1 ELSE NULL END Failed
FROM dbo.factbuildchangeset chg
INNER JOIN dbo.factbuilddetails det ON det.buildsk = chg.buildsk
INNER JOIN dbo.DimChangeset dchg ON dchg.ChangesetSK = chg.ChangesetSK
INNER JOIN dbo.DimPerson dper ON dper.PersonSK = dchg.checkedinbysk
INNER JOIN dbo.dimbuild dbuild ON det.buildsk = dbuild.buildsk
WHERE BuildType = 'Individual Continuous Integration'
```

This joins a number of tables so that we can determine who checked in each changeset and what the result of that check-in was. I use a CASE expression to convert the build status value into a Succeeded or Failed field that can then be counted to support the scoring for the leaderboard. The WHERE clause ensures that we only score check-ins made as part of the continuous integration build process: we are only interested in builds that run as the result of a check-in, otherwise people could gain points just by re-running the same successful build again and again.
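To see why the CASE expressions return NULL rather than 0, here is a minimal sketch that runs the same CASE logic over a few made-up rows. The names and status values in the VALUES list are hypothetical sample data, not warehouse contents. COUNT skips NULLs, so each column counts only the builds of that kind.

```sql
-- Hypothetical sample rows standing in for the joined warehouse tables.
SELECT s.Name,
       COUNT(s.Succeeded) AS SucceededBuilds,   -- counts only non-NULL values
       COUNT(s.Failed)    AS FailedBuilds,
       COUNT(s.Succeeded) * 10 - COUNT(s.Failed) * 10 AS BuildPoints
FROM (
    SELECT v.Name,
           CASE v.BuildStatusSK WHEN 3 THEN 1 ELSE NULL END AS Succeeded,
           CASE v.BuildStatusSK WHEN 2 THEN 1 WHEN 1 THEN 1 ELSE NULL END AS Failed
    FROM (VALUES ('Alice', 3), ('Alice', 3), ('Alice', 2), ('Bob', 1))
         AS v (Name, BuildStatusSK)
) AS s
GROUP BY s.Name;
```

With this sample data Alice has two successful builds and one failed build (10 points), and Bob has one failed build (-10 points).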

To get the number of unit tests added, I created a couple of views directly in the TFS warehouse, in my own schema called custom:

1. This creates a list, ordered by ascending build, of the number of unit tests present in each build.

```sql
CREATE VIEW [custom].[vwTestRunsByProjectAndBuild] AS
SELECT tr.TeamProjectSK
      ,tr.BuildSK
      ,COUNT(tr.BuildSK) testcount
      ,ROW_NUMBER() OVER (ORDER BY tr.TeamProjectSK, tr.BuildSK) ordinal
FROM facttestresult tr
GROUP BY tr.TeamProjectSK, tr.BuildSK
```

2. This finds the number of tests added or taken away per build. Because SQL Server (before the 2012 release) lacks LAG and LEAD functions, it does a self join of the previous view using the ordinal value.

```sql
CREATE VIEW [custom].[vwTestsAddedByProjectAndBuild] AS
WITH T2 AS
(
    SELECT * FROM custom.vwTestRunsByProjectAndBuild
)
SELECT T1.TeamProjectSK,
       T1.BuildSK BuildSK,
       TestsAdded = CASE WHEN IsNull(T1.testcount - T2.testcount, T1.TestCount) > 0
                         THEN IsNull(T1.testcount - T2.testcount, T1.TestCount)
                         ELSE 0 END,
       T1.TestCount T1TestCount,
       T2.TestCount T2TestCount
FROM
(
    SELECT * FROM custom.vwTestRunsByProjectAndBuild
) T1
LEFT JOIN T2 ON T1.ordinal - 1 = T2.ordinal
```
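For comparison, on SQL Server 2012 or later the same "difference from the previous build" calculation can be written with LAG instead of a self join on the ordinal. The sketch below is an assumption about a newer server version, not what I ran; it uses made-up build numbers and test counts in a VALUES list so that it stands alone.

```sql
-- Assumes SQL Server 2012 or later, where LAG is available.
-- The rows in VALUES are hypothetical sample data.
WITH runs AS (
    SELECT v.TeamProjectSK, v.BuildSK, v.testcount
    FROM (VALUES (1, 100, 5), (1, 101, 8), (1, 102, 6))
         AS v (TeamProjectSK, BuildSK, testcount)
),
deltas AS (
    SELECT TeamProjectSK,
           BuildSK,
           -- A default of 0 treats the first build as having no predecessor,
           -- matching the IsNull fallback in the view.
           testcount - LAG(testcount, 1, 0)
                       OVER (ORDER BY TeamProjectSK, BuildSK) AS delta
    FROM runs
)
SELECT TeamProjectSK,
       BuildSK,
       CASE WHEN delta > 0 THEN delta ELSE 0 END AS TestsAdded
FROM deltas;
```

With this sample data the three builds get 5, 3 and 0 tests added respectively; the drop from 8 to 6 is clamped to 0, as in the view.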

3. Putting these together gives this query, which generates the list that will be used in the final report.

```sql
SELECT x.ProgrammerName, SUM(x.POINTS) "Points"
FROM
(
    SELECT fact.Name ProgrammerName,
           COUNT(fact.Succeeded) * 10 - COUNT(fact.Failed) * 10
           + CASE COUNT(fact.Failed) WHEN 0 THEN
                 -- ISNULL guards against builds with no test results, which
                 -- would otherwise turn the whole expression into NULL
                 ISNULL((
                     SELECT SUM(TestsAdded) FROM custom.vwTestsAddedByProjectAndBuild vta
                     WHERE vta.BuildSK = fact.BuildSK
                 ), 0)
             ELSE 0 END
           AS "Points"
    FROM
    (
        SELECT dper.Name,
               det.BuildSK BuildSK,
               CASE BuildStatusSK WHEN 3 THEN 1 ELSE NULL END Succeeded,
               CASE BuildStatusSK WHEN 2 THEN 1 WHEN 1 THEN 1 ELSE NULL END Failed
        FROM factbuildchangeset chg
        INNER JOIN factbuilddetails det ON det.buildsk = chg.buildsk
        INNER JOIN dbo.DimChangeset dchg ON dchg.ChangesetSK = chg.ChangesetSK
        INNER JOIN dbo.DimPerson dper ON dper.PersonSK = dchg.checkedinbysk
        INNER JOIN dbo.dimbuild dbuild ON det.buildsk = dbuild.buildsk
        WHERE BuildType = 'Individual Continuous Integration'
    ) fact
    GROUP BY fact.Name, fact.BuildSK
) x
GROUP BY x.ProgrammerName
ORDER BY "Points" DESC
```

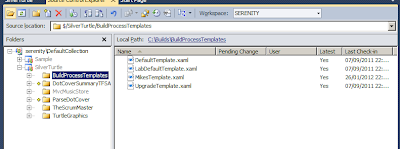

I then created a report using BI Studio 2008 as follows (note: a zip file containing this project can be found here).

Once this report is up and running, the final step is to publish it to the TFS Reporting Services site. On my machine this is at http://dell/ReportServer_TFS. I've added it to the folder TFSReports/DefaultCollection/SilverTurtle/Builds, which is the Builds folder of the project I am working on in my Default Collection.

In fact it should probably go at a higher level, as it is a leaderboard of all developers working on that particular TFS server. I imagine it would be relatively easy to constrain the data to report by project or collection if required.

A number of other metrics could be used for the report. Unit tests added is probably not the best one: for example, if you delete all the tests and put them back, you get a stack load of points. As a future enhancement I am considering code coverage, which is tracked in the warehouse model. If you improve the code coverage you get points; if you decrease it you lose points.
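As a rough sketch of how that coverage rule might be scored, the query below reuses the ordinal self join technique from the views above on some made-up coverage figures. Everything here is an assumption for illustration: the BuildSK and CoveragePct values are hypothetical sample data, the 10-point reward and penalty simply mirror the build scoring, and the real warehouse coverage tables are not referenced.

```sql
-- Hypothetical per-build coverage percentages (sample data only).
WITH cov AS (
    SELECT v.BuildSK,
           v.CoveragePct,
           ROW_NUMBER() OVER (ORDER BY v.BuildSK) AS ordinal
    FROM (VALUES (100, 60.0), (101, 65.0), (102, 62.5), (103, 62.5))
         AS v (BuildSK, CoveragePct)
)
SELECT cur.BuildSK,
       cur.CoveragePct,
       -- The 10 point values are assumed; the first build has no predecessor,
       -- so both comparisons are unknown and it falls through to 0.
       CASE WHEN cur.CoveragePct > prev.CoveragePct THEN 10
            WHEN cur.CoveragePct < prev.CoveragePct THEN -10
            ELSE 0 END AS CoveragePoints
FROM cov AS cur
LEFT JOIN cov AS prev ON prev.ordinal = cur.ordinal - 1
ORDER BY cur.BuildSK;
```

With this sample data the four builds score 0, 10, -10 and 0. Unlike the tests-added metric, deleting coverage and restoring it nets out to zero rather than a gain.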

On my setup the report looks like this. There are only a few users set up on my local system, but it illustrates the principle: